Public service professions have lagged behind the medical profession in using empirical evidence to guide decision making. David Halpern, National Adviser on What Works & CEO of Behavioural Insights Team, explains how the emergence of ‘What Works Centres’ can change this, and outlines the case for ‘radical incrementalism’.

We take it for granted, when a doctor writes a prescription, that there are good grounds to think it might make us better. But what makes us think, when we drop our kids off at school, that the way they are taught is effective? Or, when we report a crime, that the way the Criminal Justice System responds is likely to lead to less crime in the future? Over the last four years, a set of institutions has been created to answer these most basic ‘what works?’ questions, and their early results are already causing a stir.

A search for evidence

In the 1960s and 70s, the medical establishment was shaken to its core. Realising during his time as a medic in World War II that “there was no real evidence that anything we had to offer [in the treatment of tuberculosis] had any effect”, Dr Archie Cochrane began a restless search to improve the evidence base behind medical practice. Despite contemporary criticisms that this was unnecessary, or even unethical, the results often revealed ineffective, and sometimes even counterproductive, medical practice.

Cochrane’s approach ultimately led to the creation of the National Institute for Health and Care Excellence (NICE) in 1999, and indeed to the Cochrane Collaboration, a global network of collaborators spread across more than 120 countries dedicated to evidence-informed healthcare. His doubts have extended the lives of millions of people across the world and reshaped the character of medicine itself.

But, as Jeremy Heywood asked at his first public speech as Cabinet Secretary: if NICE made sense for medicine, why didn’t we have a ‘NICE’ for all the other public service professions – or even for the civil service itself?

More than 200,000 good empirical studies have been published on the relative effectiveness of alternative medical treatments. By contrast, equivalent studies in welfare, policing, social and economic policy and practice run only to a few hundred combined. Can we really argue that the life-and-death outcomes of medical treatments make systematic testing ethically acceptable, but that it is wrong to test the efficacy of welfare or education?

Fortunately, things are starting to change – and fast. NICE is now the most mature of seven independent ‘What Works Centres’, operating across a range of policy areas.

What works in education?

In 2011, the Education Endowment Foundation (EEF) was created. As Kevan Collins, its CEO, recently explained, the EEF has ‘laid to rest the idea that you cannot do randomised control trials (RCTs) in education’. It has already funded more than 90 large-scale trials – all but 5 of which are RCTs – across more than 4,000 UK schools and involving more than 600,000 children.

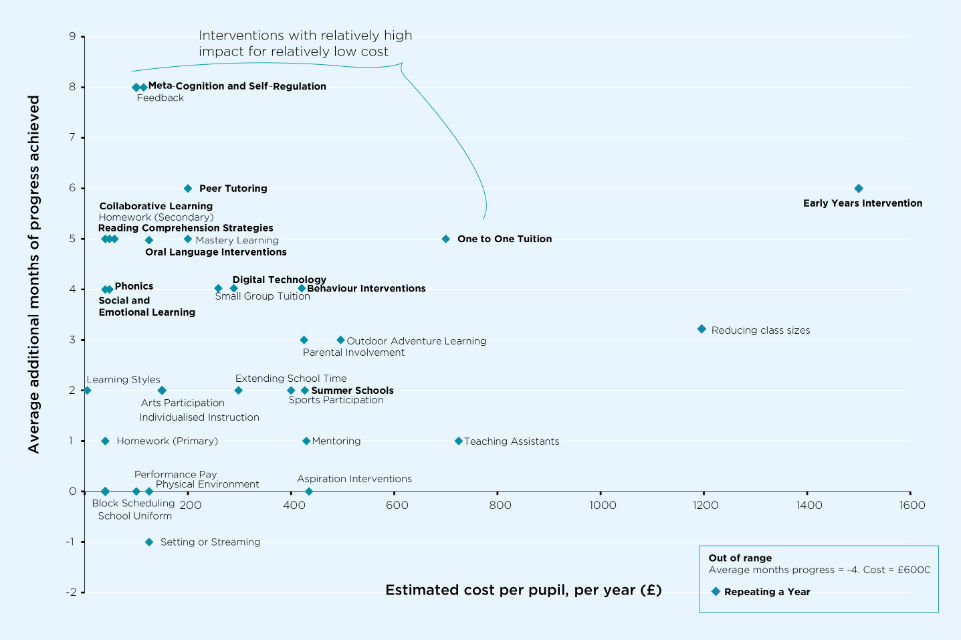

In collaboration with Durham University and the Sutton Trust, the EEF has produced a toolkit aimed at school heads, teachers, and parents. This summarises the results of more than 11,000 studies on the effectiveness of education interventions, as well as the EEF’s own world-leading studies (http://educationendowmentfoundation.org.uk/toolkit/). For each educational approach, such as peer-to-peer learning or repeating a year, the toolkit summarises how much difference it makes (measured in months of additional progress); how much it costs (assuming a class of 25); and how confident the authors are of the effect, on the basis of the evidence so far.

Estimated cost per pupil per year is based on a class size of 25. Text highlighted in bold signifies interventions for which the evidence on effectiveness is extensive or very extensive according to the Toolkit definitions. Source: The Sutton Trust – EEF Teaching and Learning Toolkit and Technical Appendices: http://educationendowmentfoundation.org.uk/toolkit/about-the-toolkit/

Headteachers can therefore base spending decisions on robust evidence about their impact on pupils. For example, it turns out that extra teaching assistants are a relatively expensive and in general not very effective way of boosting performance, whereas small group teaching and peer-to-peer learning are much more cost effective. Repeating a year is expensive and, in general, actually counterproductive.

The growth of What Works Centres

Other What Works Centres are starting to provide similar guidance across most areas of domestic policy and practice. The What Works Centre for Local Economic Growth (LEG) provides advice for Local Enterprise Partnerships. It has concluded, for example, that relatively short skills training is a cost effective way of boosting local employment and growth, especially when local employers are involved. It has also ruffled feathers by highlighting that local areas spending money on major sporting and cultural events is a rather less effective way of boosting growth (though it may be justified on other grounds).

The Early Intervention Foundation is working with 20 Local Authorities to provide advice on the most effective ways of intervening early in life to prevent later costs, from nurturing social and emotional development to reducing domestic violence. Recent work by the EIF with 13 of its ‘Pioneering Places’ revealed that 47% of the interventions being delivered had little or no evidence in an established clearing house to support them. This information is helping the Places to allocate resources towards more cost effective interventions.

The What Works Crime Reduction Centre, hosted with the College of Policing, releases its toolkit shortly. This will include the established but still little known finding that interventions which aim to prevent young people committing crime by taking them to visit prisons are on average ineffective and sometimes actually increase the risk of criminal behaviour. The toolkit is built to guide the decisions facing Police and Crime Commissioners, and other professionals across the Criminal Justice System.

The What Works Network is growing. The Centre for Ageing Better is under development, and a new What Works Centre for Wellbeing was launched at the end of October. The Public Policy Institute for Wales and What Works Scotland have also recently joined the Network as associate members.

Implications for all of us

Often the What Works reviews reveal just how little we know about the cost-effectiveness of many policies and operational practices. Just as Archie Cochrane and his colleagues helped turn medicine into the empirical discipline we recognise today, we need to push the same restless empiricism into every other area of public policy and practice. Experimental studies have shown that we are all strongly prone to overconfidence; we need to follow in the footsteps of Archie Cochrane, recognising how much we don’t know.

Think of how your policy and operational choices to date could have been aided by experimentation or variation, rather than plumping for your best informed guess. You could have explored different options - varying the intensity of the intervention, trying different routes to delivery, or randomising the roll-out across the country to test its impact.
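To make the last of those options concrete, here is a minimal sketch of randomising a roll-out. It assumes a simple two-wave design in which half of the areas receive the intervention first and the rest act as the comparison group until their turn comes. The function name, the area labels and the seed are hypothetical, chosen purely for illustration; they do not describe any particular trial.

```python
import random


def randomise_rollout(areas, seed):
    """Split areas at random into an early wave and a later wave.

    A fixed seed makes the allocation reproducible, so that it can be
    audited after the event. (Hypothetical helper, for illustration only.)
    """
    rng = random.Random(seed)
    shuffled = list(areas)   # copy, so the caller's list is left untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]


# Hypothetical area labels, not real local authorities.
areas = ["Area A", "Area B", "Area C", "Area D", "Area E", "Area F"]
early_wave, later_wave = randomise_rollout(areas, seed=2014)
print("Intervention first:", early_wave)
print("Comparison until wave two:", later_wave)
```

Because chance alone decides which areas go first, later differences in outcomes between the two waves can more plausibly be attributed to the intervention than to whatever made some areas keener to volunteer.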

Building deliberate experimentation into policy from the outset is the single best way that we can strengthen the evidence base on which the What Works approach is built. In a world of digital-by-default, the opportunities for experimentation are greater than ever, and the costs lower. Visit a Google or Amazon webpage and you will almost certainly be viewing one of a number of variants as they endlessly test ‘what works’ best, so-called A/B testing. The Behavioural Insights Team took a similar approach when working with Public Health England, testing 8 variations of a webpage to encourage more people to sign up as organ donors when arranging their car tax, and more than 20 variations on a quit-smoking site. How many variations are you trying on your web-estate?
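For readers who have not run such a test, the mechanics can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a description of how any of the trials mentioned above were implemented: visitors are assumed to have a stable identifier, allocation is done by hashing that identifier, and two variants are compared with a standard two-proportion z-statistic. All function names and figures are hypothetical.

```python
import hashlib
import math
from collections import Counter


def assign_variant(visitor_id: str, n_variants: int) -> int:
    """Allocate a visitor to one of n_variants page designs.

    Hashing the identifier gives an effectively random but stable
    allocation: the same visitor always sees the same variant.
    """
    digest = hashlib.sha256(visitor_id.encode("utf-8")).hexdigest()
    return int(digest, 16) % n_variants


def signup_rates(visits):
    """Return the sign-up rate per variant from (variant, signed_up) pairs."""
    shown = Counter()
    signed = Counter()
    for variant, signed_up in visits:
        shown[variant] += 1
        if signed_up:
            signed[variant] += 1
    return {variant: signed[variant] / shown[variant] for variant in shown}


def two_proportion_z(success_a, n_a, success_b, n_b):
    """z-statistic for the difference in sign-up rates between two variants.

    Assumes at least one sign-up and one non-sign-up overall, otherwise
    the pooled standard error is zero.
    """
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return (p_b - p_a) / std_err


# Made-up figures for illustration only: 20 of 1,000 visitors signed up on
# variant A and 40 of 1,000 on variant B.
z = two_proportion_z(20, 1000, 40, 1000)
print(f"z = {z:.2f}")  # |z| above roughly 1.96 is conventionally 'significant'
```

With eight or twenty variants rather than two, the same allocation function applies unchanged, though the comparison would need to allow for the larger number of tests being run.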

In a postscript to what would become a landmark text, Cochrane wondered if he’d been too harsh on his medical colleagues, who were in many ways ahead of other professions:

What other profession encourages publications about its error, and experimental investigations into the effect of their actions? Which magistrate, judge, or headmaster has encouraged RCTs into their ‘therapeutic’ and ‘deterrent’ actions? [Cochrane, 1972, p87]

Fortunately, albeit 40 years later, that is changing. Be a part of it.

‘Radical incrementalism’ is the idea that dramatic improvements can be achieved, and are more likely to be achieved, by systematically testing small variations in everything we do, rather than through dramatic leaps into the dark. For example, the dramatic wins of the British cycling team at the last Olympics are widely attributed to the team’s systematic testing of many variations in bike design and training schedules. Many of these led only to small improvements but, when combined, they created a winning team. Similarly, many of the dramatic advances in survival rates for cancer over the last 30 years are due more to constant refinements in treatment dosage and combination than to new ‘breakthrough’ drugs. If we apply similar ‘radical incrementalism’ to public sector policy and practice, from how we design our websites to the endless details of jobcentres and business support schemes, we can be pretty confident that these incremental improvements can together lead to an overall performance that is utterly transformed in its cost-effectiveness and overall impact.

You can read the Centres’ recent report at https://www.gov.uk/government/publications/what-works-evidence-for-decision-makers.

6 comments

Comment by Ellen Veie posted on

Hi Riel, very interesting findings about what we know/don't know about what works, and what we learn from it as policy makers

Ellen

Comment by christine campbell posted on

Interesting stuff

'radical incrementalism'

systematically testing small variations in everything we do, rather than through dramatic leaps into the dark.

Perhaps the 'leap' into UC would be less painful for all if this practice had been adopted, instead of the 'radical steamroller' approach which seems to have been adopted

Comment by Philip Tierney posted on

Will this mean the end of the 'I believe..' Minister and the rise of the 'Well, contrary to my expectations, the evidence points to....' Minister? Will findings that run against the grain of the Tabloid mindset be followed up? Or will this become another example of the trappings of the scientific method being hijacked to give non-scientific practices and pre-selected outcomes the veneer of intellectual honesty?

Comment by David Halpern posted on

In a meeting a couple of weeks ago, there was a disagreement between two major departments about how to proceed on a given issue. One of the Ministers turned to the other - with no direct prompt from me I might add - and said thoughtfully if hesitantly, 'why don't we do this as a Randomised Control Trial?' The other Ministers agreed. Wonderful and important moment. When Ministers and Departments feel comfortable to broker their differences in this way, experimental government is no longer just an idea...

Comment by HENRY BROWN posted on

IT IS GREAT TO EXPERIMENT ON BETTER WAYS OF DOING THINGS. HOWEVER IT IS IMPORTANT THAT EXPERIMENTS DO NOT DEFY LOGIC, HAVING A CONSEQUENCE OF CAUSING PEOPLE TO SUFFER MENTAL HEALTH PROBLEMS.

Comment by Andrew posted on

Surely an experiment which defies logic is going against this principle, as well as any other scientific principle? Small increments of change a la GB cycling team are a positive way to go and mean that logic-defying experiments are a thing of the past?

Secondly, it is surely not the experiment, however illogical, that causes MHIs? It's the putting into practice of illogical processes, whatever their derivation?