In the Civil Service, we are constantly trying to improve our performance and the services that we offer to the public. We often use data or research to help measure and improve our own team or department’s performance, but how would we go about measuring the performance of the Civil Service as a whole? The Analysis and Insight team in the Cabinet Office have been exploring this question.

In this article, we look at how existing international measures can give us some insight into how the Civil Service is performing. Reviewing the existing indicators made it clear that there is scope to produce a new measure of performance – one which tries to measure the aspects of the Civil Service that matter and which we can use to improve our own performance. We are currently working with the OECD to agree what a new indicator should attempt to measure and to think through the practicalities of setting it up.

A key question is how we establish what we try to measure - how do we know what really matters? We have set out some ideas about what we should try to measure below, but we’d welcome your views on whether we are focusing on the right areas.

Existing measures - the Worldwide Governance Indicators

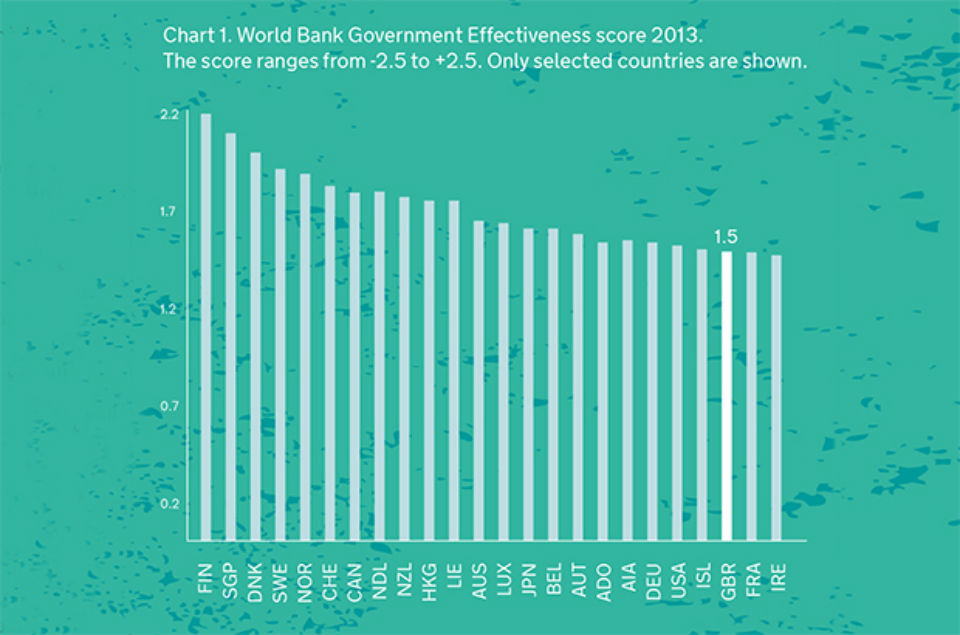

The first set of indicators we looked at was the Worldwide Governance Indicators (WGI), produced by an analytical team funded by the World Bank. The UK ranked 22nd out of 210 countries in the latest (2013) Government Effectiveness measure. We were below the Scandinavian countries and others such as Canada and Australia.

Chart 1. World Bank Government Effectiveness score 2013. The score ranges from -2.5 to +2.5. Only selected countries are shown.

At first glance, this measure appeared to meet our objectives. These were to find an independent measure of Civil Service effectiveness that provided some insights into Civil Service performance and allowed for international comparison. However, we soon realised this measure wasn’t really suitable for what we wanted to measure.

The way this indicator measured government effectiveness didn’t fit well with our idea of what makes the Civil Service effective. The focus appeared to be on the extent to which ‘bureaucracy’ or red tape impinges on businesses. Of course, this is an important aspect of performance. But the Civil Service does so much more than create regulations that the WGI ended up being a very narrow measure of government effectiveness.

Furthermore, one of the reasons we want to measure Civil Service performance is to learn how we can improve it. However, the UK score in the WGI is driven heavily by data provided by two external organisations: EIU and IHS Global Insight. The underlying data they produce is not published by the WGI, nor available without a subscription to these organisations. This lack of transparency makes it difficult to examine whether the assessment of UK performance is accurate and to take action to improve it.

Existing measures - the Bertelsmann Foundation’s Sustainable Governance Indicators

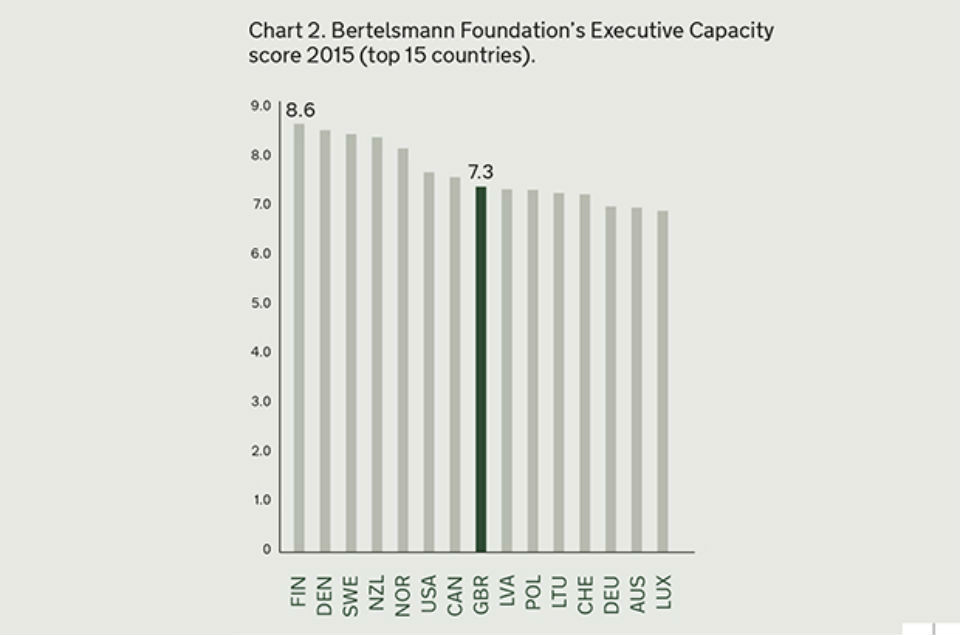

The next measure we explored was the Bertelsmann Foundation’s Sustainable Governance Indicators (SGIs). The UK ranked 8th in the latest (2015) measure of executive capacity, ahead of France and Germany but behind the USA.

Chart 2. Bertelsmann Foundation’s Executive Capacity score 2015 (top 15 countries).

The Executive Capacity dimension of this indicator is more directly focussed on the work of the Civil Service than the WGI Government Effectiveness indicator. It assesses activities that the Civil Service carries out on a daily basis, such as the impact of implementing regulations, the level of policy coordination and how well policies are communicated to the public. The SGIs also include a UK report which details how the UK performs across each of the sub-indicators which make up the Executive Capacity score.

At this stage, the Bertelsmann Foundation’s indicator seemed to be moving out in front in the search for the best international indicator of Civil Service performance. However, as with the WGI, we had some concerns about the methodology. Firstly, Bertelsmann gives different weightings to the sub-indicators that make up the overall score. For example, they give ‘Self-Monitoring’ more than seven times as much weight as ‘Government Office Expertise’. We are not sure that this is the right judgement.

Secondly, the SGIs employ just two UK country experts to assess the UK in over 60 areas of governance performance – this limits the experts’ capacity to fully understand some of the developments the UK has made in these areas. As a result, the indicators are a limited tool for driving improvements.
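The weighting concern can be illustrated with a minimal sketch. It assumes the overall score is a simple weighted average of sub-indicator scores, which may not match the SGI methodology exactly. The scores, the 1 to 10 scale and the 7:1 weight ratio below are hypothetical values chosen for illustration; they are not taken from the SGI data.

```python
def composite_score(scores, weights):
    """Return the weighted average of the sub-indicator scores."""
    total_weight = sum(weights[name] for name in scores)
    weighted_sum = sum(scores[name] * weights[name] for name in scores)
    return weighted_sum / total_weight


# Hypothetical sub-indicator scores on an assumed 1-10 scale.
scores = {
    "Self-Monitoring": 4.0,
    "Government Office Expertise": 9.0,
}

# Equal weighting treats both sub-indicators as equally important.
equal_weights = {
    "Self-Monitoring": 1.0,
    "Government Office Expertise": 1.0,
}

# Hypothetical 7:1 weighting, loosely echoing the ratio described above.
skewed_weights = {
    "Self-Monitoring": 7.0,
    "Government Office Expertise": 1.0,
}

equal = composite_score(scores, equal_weights)
skewed = composite_score(scores, skewed_weights)

print(f"Equal weights:  {equal:.3f}")
print(f"Skewed weights: {skewed:.3f}")
```

With these made-up inputs, the same pair of sub-indicator scores produces a noticeably lower overall score under the skewed weights, because the weaker sub-indicator dominates. This is why the choice of weights matters as much as the underlying assessments.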

What else should be included?

Reviewing these measures of government performance has given us insight into how UK Government performance is currently assessed. Yet neither of these measures provided us with an objective, comprehensive and transparent measure of Civil Service effectiveness.

Our search persuaded us of the value of measuring the performance of the Civil Service as a whole and comparing our performance with our international peers. However, as the existing measures didn’t meet our criteria, we are exploring how we could create a new measure of Civil Service effectiveness. We think the new indicator should attempt to measure what matters to the Civil Service in the 21st century. It should measure areas such as openness, digital, innovation and value for money.

The UK Civil Service is increasingly moving to a more open and more digital way of operating to ensure we can deliver the best services and user experience possible. We are increasingly interested in securing value for money in the way we operate and we aim to provide innovative solutions to complex problems. The extent to which governments use innovative approaches in policy development, the way governments use technology, and the way governments interact with citizens are also important measures of effective governance. Neither the WGI nor the Bertelsmann indicators explicitly captured digital and openness, but perhaps a new indicator could.

Other organisations produce indicators that focus solely on these elements and show the UK performing very strongly, particularly on measures related to openness.

For example, the UK ranked top in the Open Knowledge Foundation’s Open Data Index in 2014. This indicator measures whether publicly held data across ten themes are defined as open.

The UK ranked 4th in 2014 in the UN’s e-Participation index, which is a broader measure of openness, capturing the use of online services to provide information to citizens and also how governments are engaging citizens to contribute to policy making. The UK also performed well in the UN’s Online Service Index, ranking 11th out of 193 countries in 2014. This indicator assesses the scope and quality of national governments’ online services. We think these elements should be captured in a new indicator.

The Worldwide Governance Indicators and the Bertelsmann Foundation’s indicators also failed to measure innovation or value for money. We recognise that these are very difficult to measure and we are continuing to look for stand-alone indicators that measure government performance in these two areas.

Where next?

Recently we began to test our thinking with others – including through a blog post written by the Department for International Development Permanent Secretary, Mark Lowcock, who is acting as a champion for this work. However, a key question for us was whether other countries would also be interested in developing new indicators to help benchmark their performance and facilitate learning from others. Happily, the answer is yes. We were able to have a number of discussions with other countries during the course of Public Governance week at the OECD in April and there was significant interest in taking this forward. We are now working with the OECD and other interested countries to make progress on this.

If reading this article has inspired you to think about how we should measure our performance, we would welcome views on what should be included in a new measure of Civil Service effectiveness, as well as how it might best be developed and published. What matters to you? And what matters to the citizens we serve?

Read more about the Analysis and Insight Team on our blog

2 comments

Comment by Stuart Holttum posted on

While I support the idea of measuring success with a view to improving, I found it hard not to get the impression from the article that they were discarding measures of performance because our scores weren't high enough under that system. Choosing to measure only against the things you think are important is a self-fulfilling prophecy, surely?

I would also suggest that the Civil Service choosing the criteria by which it will measure its success is getting the wrong end of the stick: after all, we can choose whatever we are best at and measure against that, thus "winning".....

Surely, given that we are the Civil Service - the Service for the People - we should not ourselves be choosing what to measure ourselves against, but let the people decide what is most important to them? I would hazard a guess that to the average citizen, an "innovative" Civil Service is far less important than one that is honest, responsive, and understanding of their issues?

Selecting our own scoring system is a great way to "win" - while potentially alienating the people we are here to serve.

Comment by Ed Dyson posted on

I'm not convinced by the value of constructing a new indicator. We may end up with something that is not widely adopted (therefore not comparable) and which in a couple of years is superseded by new priorities and becomes defunct. Why not concentrate on data that is already collected - towards the end of the article there seem to be some strong leads for specific themes and the information is already there. Then we can look at the gaps. Value for money is a good one - it's what matters to taxpayers, but how do we measure it? One of the biggest factors must be the effectiveness and efficiency of our decision-making processes - has anyone tried to measure that?