Introduction

Productivity growth is essential. It is the only sustainable way to raise living standards over the long term. Efforts to boost it across the whole economy must include the public sector. Measuring it for government agencies and services, however, is not straightforward. This article considers the measurement of Defence productivity, and outlines how some recent work might be applied more widely.

The central obstacle to measuring productivity in Defence is that we deliberately – and entirely understandably – avoid armed conflict other than as a last resort. Furthermore, we would ideally like to fulfil the Chinese philosopher Sun Tzu’s maxim that “the supreme art of war is to subdue the enemy without fighting”.¹ Deterring aggression by visible military strength is preferable to war. However, we want to ensure that the significant resources² devoted to maintaining military capabilities are spent effectively and deliver value for money.

Recent work by the Ministry of Defence (MOD) has used a variant of the Public Sector Efficiency Group (PSEG)³ conceptual model to benchmark British capabilities against international peers.

Measuring productivity in the public sector

Most attempts to evaluate productivity involve some sort of measurement difficulty, even for a profit-making private enterprise with a simple business model. Quantifying actual – as opposed to contracted – hours worked, and translating data on turnover, purchases, pay and profits into an output measure, means assumptions must be made. Nevertheless, it is generally possible to compute mainstream productivity benchmarks, such as value added per worker.

The most obvious productivity measurement problem for the public sector is that the goods and services that it provides are not sold at market values. Rather, they are often distributed via non-market administrative mechanisms, or implemented directly without payment by users or beneficiaries. This deprives analysts of the output valuation that selling an end product provides. Substitutes, such as willingness-to-pay assessments, are usually inferior to ‘hard’ sales-based data as a basis for value estimation.

Second, the social problems that government aims to tackle are generally more complex than the operations of a profit-seeking business. Providing public services and national infrastructure, or conducting military operations, are long-term engagements. Results may not be apparent for years, even decades, and will then be hard to evaluate against shifting goals and changes in society. The relationship between the delivery of individual outputs and the achievement of desired outcomes is often less clear.

A third factor is that, for many government agencies, including the MOD, the effects they seek depend upon large-scale coordination and integration of disparate activities and systems to produce a combined effect or output that exceeds the sum of its inputs. In a military context, this is known as ‘combined arms integration’. The meshing of specialist ground troops, armoured vehicles, fixed and rotary-wing air power, artillery, engineer mobility and countermobility assistance, and supporting medical, logistical and communication arrangements, is required to defeat a sophisticated adversary.

The productivity of any entity that contributes to a larger whole is inherently hard to assess, unless sub-outputs can be accurately isolated and valued. Although there are limited parallels with advanced manufacturing processes, or large businesses operating across multiple industry sectors, the public sector is more exposed to this measurement issue.

Measuring Defence productivity

Turning to productivity measurement problems specific to Defence, the first major challenge revolves around the contingent nature of its outputs. Defence assets, including manned ships and aircraft, and land forces held at readiness to deploy, are designed to perform their core roles in situations that very rarely occur and which the authorities purposefully avoid. This creates management issues far bigger than just productivity measurement, around motivating employees, appraising performance, and providing continuous, rigorous and realistic training. However, with large parts of the armed forces untested in battle for decades, there is inevitably the unknowable element of how they would have performed in a hypothetical conflict, and consequently of how productive Defence expenditure really is.⁴

The second measurement problem for Defence is that deterrence of hostile actors and potential enemies is a key outcome. This makes matters worse. Not only does the MOD have the difficulty of assessing its operational effectiveness in a range of possible scenarios, but there is a further unknowable. How do potential adversaries perceive and react to our known military strength, and how would those perceptions and reactions have changed if we had invested in other force structures or weapon systems, or showcased our armed forces’ capabilities differently?

Defence is not alone in facing these productivity measurement challenges, but it is perhaps uniquely affected by the severity and combination of both. The fire service also trains for catastrophic events, which are infrequent. However, it does not seek a deterrent effect in the way that Defence does. The police aim to deter crime, but can practise their skills, and demonstrate the results of their work, on a day-to-day basis.

International benchmarking via the PSEG framework

There is a formidable array of barriers to the objective measurement of Defence productivity. However, the statistical default procedure of equating outputs with inputs⁵ is unsatisfactory. This rates military forces by their cost or number of people employed, regardless of their operational effectiveness or ability to inhibit hostile action.
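A minimal sketch in Python may help to show why equating outputs with inputs is unsatisfactory as a productivity measure. The input volumes are invented for illustration and the function name is hypothetical. The only point is that, once output is defined as equal to input, the output:input ratio is 1 in every period, so measured productivity growth is zero by construction.

```python
def productivity_index(outputs, inputs):
    """Return the output:input ratio for each period."""
    return [o / i for o, i in zip(outputs, inputs)]


# Illustrative input volumes for four periods (invented numbers, not MOD data).
input_volumes = [100.0, 104.0, 98.0, 110.0]

# Under the output = input convention, output is simply set equal to input.
output_volumes = list(input_volumes)

ratios = productivity_index(output_volumes, input_volumes)

# Period-on-period growth in the ratio.
growth = [later / earlier - 1.0 for earlier, later in zip(ratios, ratios[1:])]

print(ratios)  # every ratio is 1.0
print(growth)  # every growth rate is 0.0
```

However much the inputs rise or fall, the ratio never moves, which is why an alternative measure of output is needed before productivity can be assessed at all.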

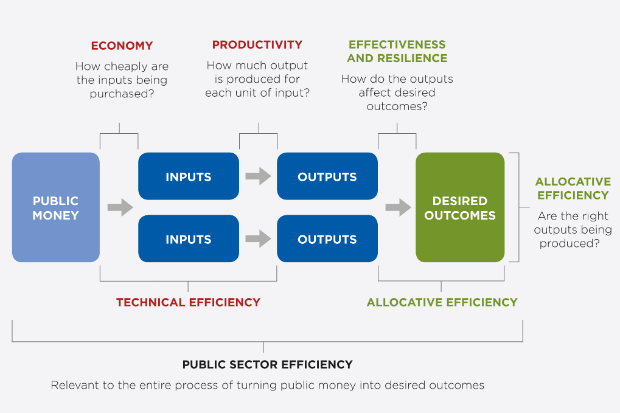

The MOD’s initiative to develop its own productivity assessment methodology has moved forward in stages. The first step was to identify the PSEG conceptual framework, shown in Figure 1, as a suitable starting point.

This model was selected because it defines productivity clearly and in a way compatible with military capability data. It also recognises explicitly the important difference between outputs and outcomes.

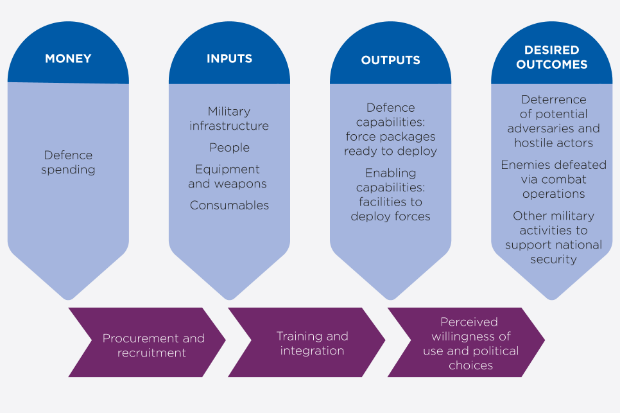

Next, the PSEG model was adapted to create a Defence-bespoke variant, as shown in Figure 2.

Third, we incorporated the measurement issues discussed above. This suggested the sort of analysis that would not work:

- The combination of the ‘contingent output’ and ‘deterrence’ problems makes it difficult to assess the conversion of Defence outputs into outcomes. Attempting to do so would be highly subjective and amount to second-guessing political choices around deployment of the armed forces.

- Assigning costs to inputs and outputs is ruled out. Although some inputs can be accurately costed, measurement issues increase from left to right across the diagram.

- A single Defence-wide productivity metric is impractical, because of the ‘combined arms integration’ issue and difficulty weighting component parts of Defence outputs.

The same exercise does, however, point to a way forward:

- It should be feasible to compute meaningful output:input ratios for individual capabilities over a selection of key input and output items. This would have the critical advantage of enabling international comparisons, given that many countries generate and operate major equipment platforms in similar ways.

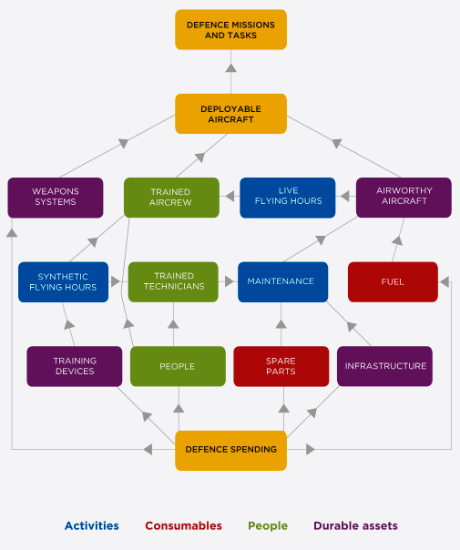

The final step to apply the Defence productivity model to an individual capability was to identify the main inputs and outputs involved. Figure 3 shows this for a specific military aircraft.

The key ratios calculated, and compared to data from three other air forces that operate the aircraft, showed that the UK was relatively productive. The RAF achieved significantly higher flying hours per aircraft, with a lower maintenance burden and more efficient use of infrastructure.
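The kind of comparison described above could be sketched as follows. This is an illustration under stated assumptions, not the MOD's actual method: the operators, the figures, the `FleetYear` class and the two chosen ratios (flying hours per aircraft, and maintenance hours per flying hour as a stand-in for maintenance burden) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FleetYear:
    """One operator's annual figures for a single aircraft type (hypothetical)."""
    operator: str
    aircraft: int              # input: number of aircraft in the fleet
    flying_hours: float        # output: total hours flown in the year
    maintenance_hours: float   # input: total maintenance effort in the year

    @property
    def flying_hours_per_aircraft(self) -> float:
        return self.flying_hours / self.aircraft

    @property
    def maintenance_hours_per_flying_hour(self) -> float:
        return self.maintenance_hours / self.flying_hours


# Invented figures for four anonymous operators; not real air force data.
fleets = [
    FleetYear("Operator A", 20, 6000.0, 90000.0),
    FleetYear("Operator B", 25, 6250.0, 112500.0),
    FleetYear("Operator C", 10, 2000.0, 40000.0),
    FleetYear("Operator D", 16, 4000.0, 68000.0),
]

# Rank operators on one ratio, and report the other alongside it.
ranked = sorted(fleets, key=lambda f: f.flying_hours_per_aircraft, reverse=True)

for f in ranked:
    print(
        f"{f.operator}: "
        f"{f.flying_hours_per_aircraft:.0f} flying hours per aircraft, "
        f"{f.maintenance_hours_per_flying_hour:.1f} maintenance hours per flying hour"
    )
```

The ratios are reported side by side rather than combined into one score, in keeping with the point made earlier that a single Defence-wide metric is impractical.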

Conclusion

Military deterrence is inherently difficult – trying to persuade potential enemies of our forces’ potency, while denying them opportunities to observe those forces in action. Measuring Defence productivity is correspondingly hard. However, focusing on a carefully chosen set of key output:input ratios for individual assets or capabilities can be the basis for a broader productivity narrative.

The methodology described here helps us to understand productivity trends, and testing the results against international or civilian benchmarks gives an indication of how productive the armed forces are. The MOD started to roll it out last year, prioritising expensive capabilities for which detailed output and input data are readily available.

Defence activity will continue to be stated in the National Accounts using the output = input convention for the foreseeable future. The approach above is a first step towards addressing the unusual combination of productivity measurement problems that occur in a Defence context. It may be of interest to other public agencies that undertake contingency planning, or for evaluating influencing activity versus direct intervention.

¹ The Art of War, Sun Tzu.

² The MOD budget is currently around £39 billion per year, representing some 5% of total government expenditure, and 2% of gross domestic product (GDP).

³ The cross-government group of analysts created by HM Treasury in 2014 to develop an evidence-based understanding of public sector productivity.

⁴ The MOD makes extensive use of conflict-orientated modelling and simulation, known as ‘wargaming’, to derive best guesses of how British forces might fare in various scenarios. Significant uncertainty remains, nevertheless, especially for the largest and most serious potential crises.

⁵ The Office for National Statistics (ONS) uses the volume of inputs as a proxy for output (known as the output = input convention) for government departments and public bodies, including the MOD, whose outputs are not susceptible to direct measurement. This precludes assessment of productivity change.
