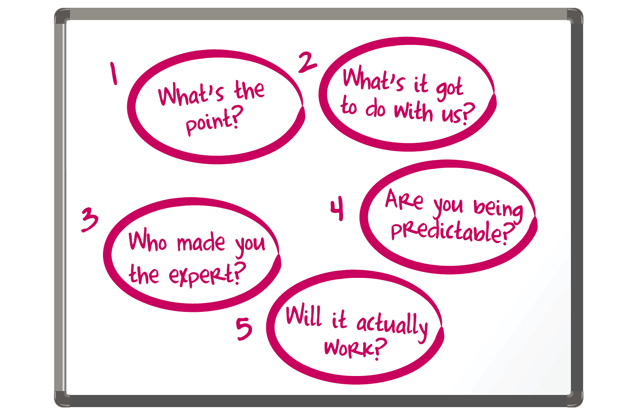

“What’s the point? What’s it got to do with us? Who made me the expert? Is my advice predictable? Will it actually work?” These are questions that policy makers should constantly ask themselves.

Last summer the Department for Education (DfE) undertook a review of the size, shape and role of central government in education and children’s services. One challenge the review identified was how the department could consistently make and deliver ‘world-class’ policy. Research told us that our main stumbling block was the lack of a shared understanding of what world-class policy is and how to make and deliver it.

In response the department introduced five ‘Policy Tests’, which were launched in January this year. These are clear, challenging questions designed to transform the way we make and deliver policy in DfE. The Tests set out the standards we should aspire to and challenge us to ensure that policy is purposeful, necessary and evidence-based, yet also radical, creative and deliverable.

Many previous attempts to improve the quality of policy have foundered because they presented policy-making as a linear process and didn’t address its messy realities. By focussing on clear questions we have developed a practicable approach to policy-making, one which we hope serves new policy and incremental changes to existing policy and delivery equally well.

Thinking about policy

Successful policies have the power to change people’s lives; flawed policies fail the public and can be incredibly costly. Walk into any high-quality bookshop and there will be a plethora of books on different professions, from business to beauty, yet very little on making government policy, despite its importance in shaping people’s lives.

Many government departments have developed their own ways of talking about and visualising the policy process. These descriptions often set out the stages of policy development and delivery in the form of ‘policy wheels’ or as linear processes. These approaches have value but we found that many DfE staff thought them too abstract to be of practical use. Research by the Institute for Government (IfG) suggests four reasons why past attempts to reform policy making have fallen short:

- setting an idealised process too distant from the reality of policy-making
- offering realistic ambitions but not specifying how they will be achieved in practice
- reorganising structures to improve policy without embedding a view of what good policy-making looks like
- neglecting the role of politics and not engaging ministers in reforms.

Creating the tests

We wanted to avoid the pitfalls of previous reforms, build on existing best practice, and develop something rooted in reality that would speak directly to staff and challenge the idea that clear standards apply only to certain people or circumstances. We wanted to leave no one involved in policy with an excuse not to apply the Policy Tests.

IfG has suggested seven ‘policy fundamentals’ integral to policy development. In the same spirit, DfE wanted something clear and arresting that encouraged officials to pause and reflect on whether their work met such high standards. We were influenced by the thesis of The Checklist Manifesto (a book by the American surgeon and journalist Atul Gawande) that the right kind of checklist liberates rather than stifles professionals:

…under conditions of complexity, not only are checklists a help, they are required for success. There must always be room for judgement, but judgement aided – and even enhanced – by procedure.

This was the starting point for developing a short but comprehensive set of questions. Paul Kissack, Director of Safeguarding Group, was instrumental in drafting the questions and setting their tone. Staff were consulted throughout the evolution of the Tests, as were ministers, who strongly endorsed the final set.

The Policy Tests

1: “What’s the point? Are you absolutely clear about what the Government wants to achieve?”

This Test stresses the importance of clarifying the Government’s expected outcomes. This may sound obvious, but positions change over time and there are few policy areas where approaches are uncontested. Regularly checking the fundamentals set out in this Test is especially important when developing policy in uncertain situations.

‘What’s the point?’ should be revisited at every policy stage. It means properly defining the problem before leaping to deliver a solution.

2: “What’s it got to do with us? Are you absolutely clear what the Government’s role is?”

It is important to take time to define what the Government’s role should be. This Test asks: ‘Does market failure occur in the policy area you are exploring? If it does, is it a failure that can only be fixed through some form of government intervention? Are we “nationalising” a localised problem? Are we confident that what we’re doing couldn’t be done differently and better elsewhere?’

3: “Who made you the expert? Are you confident that you are providing world-leading policy advice based on the very latest expert thinking?”

Ministers expect those advising them to be world experts in their policy area – or know the views of those who are. Exclusive access to data used to mean that civil servants had a monopoly on policy advice. The democratisation of data now allows commentators, practitioners and the public to draw their own conclusions about public policy questions. In this new world civil servants need to have an intimate knowledge of what the leading experts think and what the data tells us.

Alongside traditional methods of collaboration and partnership, DfE are exploring other ways of harnessing cutting-edge expertise, including the option of contestable policy pilots (i.e. projects where ministers seek policy advice from outside the Civil Service). We want to break down the walls surrounding Whitehall and create a more porous environment for innovative policy-making and delivery.

4: “Are you being predictable? Are you confident that you have explored the most radical and creative ideas available in this policy area… including doing nothing?”

Using this Test, we ask if we have been open in generating ideas. ‘Have you inadvertently set boundaries around your thinking like "stakeholders won’t like it"? Are you confident that no one can present an idea you haven’t considered?’

Departments can have favoured ways of delivering policy outcomes, such as legislation, ‘ring-fenced’ funding or guidance. All have their place but should not become default recommendations. This Test provokes us to explore all possibilities to determine what is most appropriate. In a time of restricted funding, we need to contemplate innovative, cost-effective methods, such as those drawn from behavioural economics, and use new open policy techniques to their fullest.

5: “Will it actually work? Are you confident that your preferred approach can be delivered?”

This may sound like common sense, but many ideas that look good on paper prove unworkable in practice. Clarifying the key players in delivering policy outcomes, and understanding where different policy interventions make most impact, is fundamental to policy success.

It is crucial to involve those who will be affected by policy changes. Alongside traditional consultation and collaboration, we are learning from organisations such as the Design Council and Mindlab, which advocate ethnographic research to give new insights into what approaches will actually work.

Applying the Policy Tests

Tom Jeffery, DfE’s Head of Policy Profession and Director General of Children’s Services, launched the Policy Tests in January this year. In order to drive culture change the Tests must be embraced across the department and become the established way of doing things. Early signs are encouraging. Staff have welcomed the no-nonsense challenge in the Tests and find them engaging and provocative, and the Tests are now widely known. One team said that the Tests challenge assumptions: they are phrased as such clear, common-sense questions that if any given policy initiative fails the Tests, everyone knows there is something wrong.

The Tests are now being used within early policy formulation, to review delivery processes and implementation plans, in framing recommendations for ministers, and in reviewing existing policy areas.

Staff are also applying them in more unexpected ways, such as in corporate policies, to challenge other teams’ work, and in financial planning.

Policy Tests in Action

The Social Work Reform Unit’s recent work with the Institute for Public Policy Research (IPPR), Boston Consulting Group and Absolute Return for Kids (ARK) to launch Frontline, an employment-based programme for accelerated entry to social work for high-flying graduates, was a model of Policy Tests thinking in action. Frontline is a radical departure from the established view of social work education. Consciously testing emerging thinking against the Tests reinforced the team’s belief that they had a significant, innovative proposition and allowed them to frame their planned reforms clearly to ministers.

"The format of the Policy Tests, a set of questions phrased as a deliberate challenge to woolly thinking or to acceptance of the status quo, was really helpful to us in making sure that we were heading in the right direction, rather than going with the flow as we developed the proposals with ARK and IPPR."

Graham Archer, Deputy Director, Safeguarding Group

Long-term commitment

There have been many attempts to reform policy-making and delivery. For the Policy Tests to make a real difference, they need to be embedded across DfE rather than treated as a centrally led initiative.

Having senior weight behind the drafting of the Tests, combined with staff involvement, was crucial to the initial positive reaction. Similarly, senior commitment, and advocates at all grades and in all areas of the organisation, are essential to changing behaviour.

Other government departments are considering introducing their own versions of the Tests.

Emerging critiques

No new approach will be free from criticism. For example, some colleagues are concerned that pressure to develop policy quickly can limit how fully the Tests are applied. When using the Tests, we must be realistic about timescales and pragmatic about political imperatives. Ultimately it is ministers who make decisions; as civil servants, we should strive to make our advice the best it can be.

Some people believe that the Tests over-emphasise innovation at the expense of tried-and-tested approaches. The purpose of “Is your advice predictable?” is not innovation for its own sake, but to make us examine our default solutions and whether they produce the best results. Taken alongside “Who made you the expert?”, this question can help us break away from predictability while still basing advice on evidence.

Colleagues have suggested additional questions essential for good policy-making. For example, have we been suitably steeped in the history and context of our policies? Have we spent enough time on the frontline of policy delivery to truly understand the issues? These questions could sensibly be included within the five Tests, and we are pleased with the debate the Tests have generated. We will revise the Tests on an ongoing basis, using feedback from those who have been applying them.

The goal is world-class policy

Initial reactions have been positive but the proof will be longer term – in a radical improvement of DfE policy. We have laid the foundations, but need to cement culture change by making our policy-making more open, rewarding and disseminating examples of best practice, monitoring the impact of the Tests, and supporting everyone in DfE to develop high-quality policy skills.

The Policy Tests are at the heart of our efforts to make DfE a world-class policy organisation. They provide direct and specific challenge in our work but ultimately their power lies in their ability to spur officials into thinking in a more rigorous and complete way about policy.

Don’t forget to sign up for email alerts from CSQ


4 comments

Comment by Sara Weller posted on

Great tests. Really simple and pragmatic - in one go they help open up our thinking and focus us on deliverability. How powerful would it be if every department adopted these tomorrow? Isn't this the sort of change that Civil Service Reform is driving towards?

Comment by David Fisk posted on

But 'what made you the expert?' seems to have the wrong answer. Possessing data isn't possessing information or knowledge. If data were the criterion then Think Tanks would be 'experts' as well - and that is hardly ever the case! The questions would have been a bit more credible if they'd been as reflexive as 'are you predictable' - 'what makes anyone else think you're an expert?' might have been a good start. It would certainly have sorted out the 'when did you stop beating your wife' Q5 - 'Do others who might know what they are talking about, think you're out of your mind (again)?' is my humble redraft for DfE

Comment by Dr R.K.Smith posted on

Like the authors, I too greatly admire the book by Atul Gawande - The Checklist Manifesto. I think it is an excellent idea to develop checklists for policy-making - in this case, for DfE policy making.

Based on my initial review, I recommend you consider adding three more questions that I believe to be pretty fundamental. Two of these focus on the implementation aspects of policy where, as we know from countless analyses, many of the ‘wicked’ issues arise.

Q1. How far is the policy informed by evidence? What were the key knowledge gaps?

This is intended to promote the use of evidence while recognizing that evidence is often wanting and never the sole shaper of policy.

Q2. What approaches and techniques are you using to maximize learning during introduction and roll out?

This is the ‘hook’ that tests how far the policy will be introduced using the approaches and techniques of true experimentation. I suggest it is important to avoid two common risks. One is that people fail to consider the power of simple techniques like piloting. The other is that programmes are described as ‘innovative’ or ‘experimental’ to convey a positive spin but fail to use the approaches that the terms demand.

Q3. Can you show me a true “project plan” for implementation?

Here I’m not referring just to a list of targets and milestones but to something developed properly using ‘project management’ expertise. This would not be a ‘back room’ activity to just produce a Gantt chart to append to a report. Instead it would involve a great deal of engagement. I am conscious that traditionally, this activity may have been considered to be subordinate to policy-making or something that should ‘just happen’. I recommend including this question as the nudge to force policy makers to address such things and cause bridges to be built with people who understand the realities of implementation.

Comment by Adam Cooper posted on

"Who made you the expert?" is an interesting question: policy officials who have been working in an area for a long time (and by long I mean longer than the 3-year standard for most policy officials these days) can be deemed an expert in a different way to a citizen, academic or industry expert. Often the academic or industry expert is acting on their own interest, or with a lack of appreciation for what policy (in general) is for. Hence the expertise is something that emerges between these different actors. And of course it is something that was happening when I was a social researcher in government (including in DfES as it was) in 2002-2011 as much as it is today. So the implicit criticism in this (and in the associated 'open policy making') that it wasn't happening is misleading. There are enormous questions about deeming expertise and balancing the public good against private interest. It's something we are tackling directly at UCL with our new MPA on Science/Engineering and Public Policy and we're using this blog in class today!

Overall, this is a decent enough list for policy development - probably another list is needed for policy delivery. And don't get me started on strategic policy planning... 🙂